
Europe’s Groundbreaking AI Regulations: Shaping Tomorrow’s Digital Landscape

Highlights

- The European Union (EU) has reached a provisional agreement on comprehensive regulations for artificial intelligence (AI), positioning itself to become the first major world power to enact laws governing AI.

- The deal requires foundation models such as ChatGPT to meet stringent transparency obligations before they can enter the market.

- Governments may use real-time biometric surveillance in public spaces only under specific circumstances, and the agreement bans practices such as cognitive behavioural manipulation and untargeted scraping of facial images.

- Consumers gain the right to lodge complaints, and fines for violations range from €7.5 million to €35 million.

- Businesses express concerns about added burdens, while privacy advocates criticize what they perceive as lukewarm measures against biometric surveillance.

- The legislation, expected to take effect early next year pending formal ratification, could become a global model for AI rules.

- The EU’s move demonstrates its commitment to responsible and transparent AI practices, setting a precedent for the digital future.

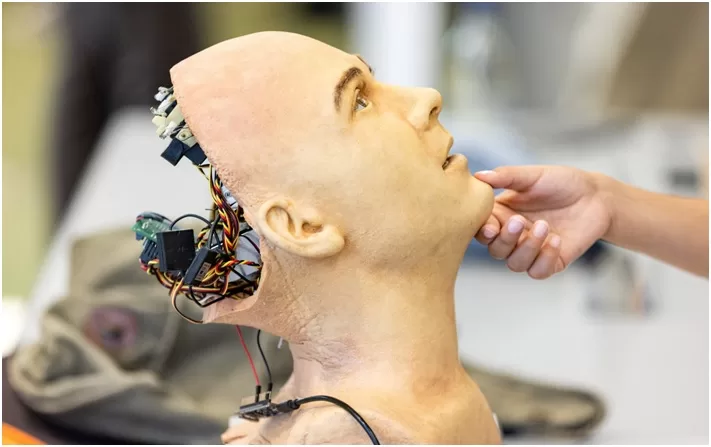

The European Union (EU) has reached a provisional agreement on comprehensive regulations governing the use of artificial intelligence (AI), moving it to the forefront of global AI governance. The accord, which followed marathon negotiations, sets the stage for the EU to become the first major world power to enact laws governing AI.

The deal requires foundation models such as ChatGPT to meet stringent transparency obligations before they reach the market. Commissioner Thierry Breton called it a “historical day” and underscored the region’s role as a global standard-setter.

Foundation models such as ChatGPT, along with general-purpose AI systems, must comply with transparency obligations: preparing technical documentation, complying with EU copyright law, and publishing detailed summaries of the content used for training. High-impact foundation models that pose systemic risks face additional requirements, including model evaluations, risk assessments, adversarial testing, and stringent cybersecurity protocols.

Governments may use real-time biometric surveillance in public spaces only under specific circumstances, such as responding to imminent threats or searching for individuals suspected of serious crimes. The agreement bans practices such as cognitive behavioural manipulation, untargeted scraping of facial images, and the use of biometric categorization systems to infer personal attributes.

Consumers will have the right to lodge complaints. Fines for violations range from €7.5 million to €35 million, underscoring how seriously the EU takes compliance.

Business group DigitalEurope has raised concerns about the added burden on companies. Meanwhile, privacy rights advocates such as European Digital Rights criticize what they see as lukewarm measures against biometric surveillance and profiling.

The legislation, expected to take effect early next year pending formal ratification, could shape AI regulation worldwide. With governments seeking to balance technological advancement against ethical considerations, the EU’s approach could become a blueprint for others, offering an alternative to the light-touch approach in the United States and China’s interim rules.

The EU’s move signals its commitment to responsible and transparent AI practices and sets a precedent for the digital future.